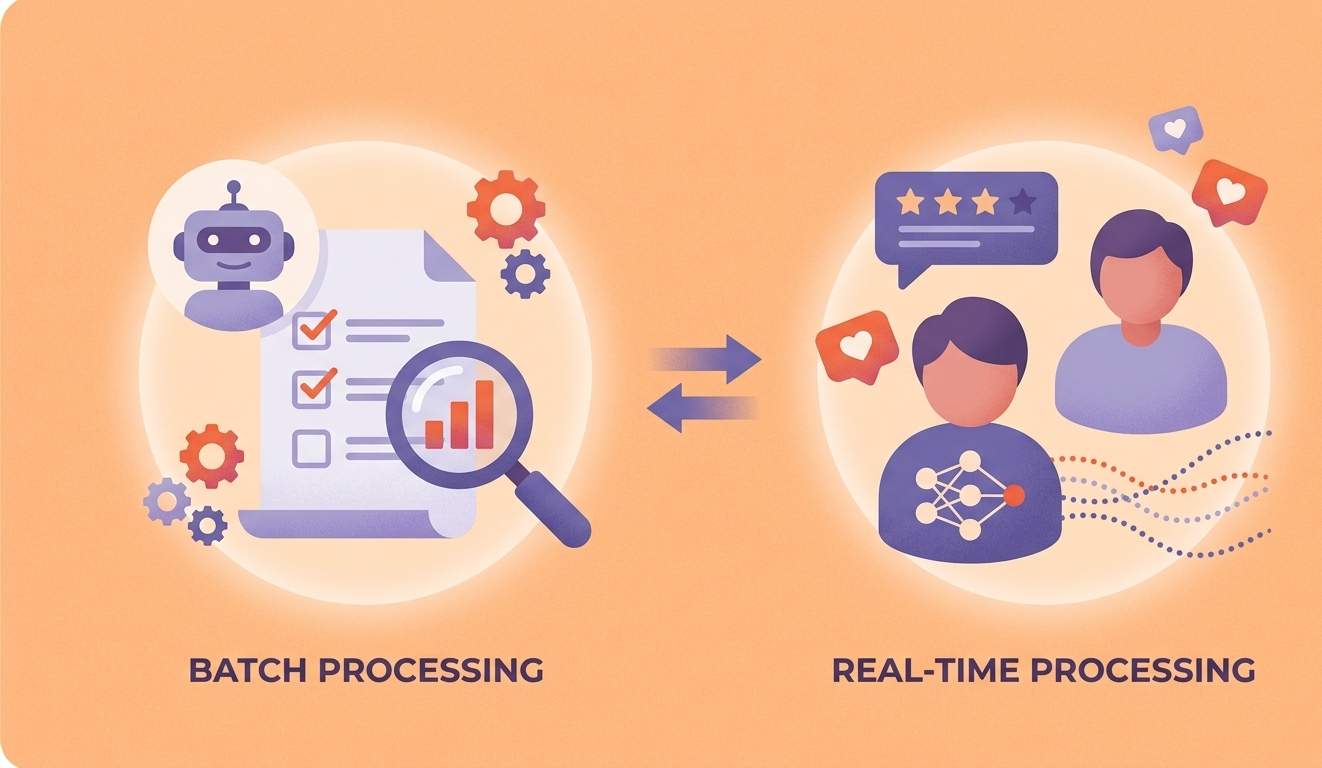

Batch vs Real-Time ML Processing

The timing of your ML predictions matters as much as the predictions themselves. Choose the right processing architecture.

Machine learning systems process data in two fundamental modes: batch (offline, scheduled) and real-time (online, on-demand). Batch processing runs predictions over large datasets at scheduled intervals, which suits analytics, recommendations, and reporting. Real-time processing generates a prediction for each data point as it arrives, which is essential for fraud detection, personalization, and interactive applications. Many production systems use both, and choosing the right mix is a critical architectural decision.

TL;DR

Use batch processing for pre-computed predictions, analytics, and workloads where latency tolerance is minutes to hours. Use real-time processing for user-facing interactions, fraud detection, and decisions that require immediate response. Most production ML systems benefit from a combination of both.

Overview

Batch ML Processing

Scheduled, offline prediction runs over large datasets. Predictions are computed in bulk, stored in a database, and served when needed. Common for recommendation engines, risk scoring, and business intelligence.
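The compute-in-bulk, store, then serve pattern described above can be sketched roughly as follows. This is a minimal illustration, not a production job: `score`, `run_batch`, the two-feature input, and the in-memory dict standing in for a database are all assumptions made for the example.

```python
from typing import Dict, Iterable, List, Tuple

Row = Tuple[str, Tuple[float, float]]


def score(features: Tuple[float, float]) -> float:
    """Hypothetical stand-in for a trained model."""
    recency, frequency = features
    return round(0.7 * frequency - 0.3 * recency, 3)


def _flush(chunk: List[Row], store: Dict[str, float]) -> int:
    # Upsert by key, so rerunning the job overwrites rather than duplicates.
    for key, features in chunk:
        store[key] = score(features)
    return len(chunk)


def run_batch(rows: Iterable[Row], store: Dict[str, float], chunk_size: int = 2) -> int:
    """Score every row in fixed-size chunks and write results to `store`."""
    chunk: List[Row] = []
    written = 0
    for row in rows:
        chunk.append(row)
        if len(chunk) == chunk_size:
            written += _flush(chunk, store)
            chunk = []
    if chunk:  # flush the final partial chunk
        written += _flush(chunk, store)
    return written


# A scheduler (cron, an orchestrator, etc.) would call run_batch on a schedule;
# serving is then just a key lookup against the stored predictions.
predictions: Dict[str, float] = {}
rows = [("u1", (1.0, 2.0)), ("u2", (0.0, 1.0)), ("u3", (2.0, 0.0))]
run_batch(rows, predictions)
```

Because results are written by key, running the same job twice leaves the store in the same state, which is what makes a full rerun a safe recovery strategy.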

Real-Time ML Processing

On-demand inference that generates predictions as individual requests arrive. Low-latency model serving for interactive applications, real-time decisions, and streaming data processing.
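As a rough sketch of on-demand inference, the handler below scores one request at a time and reports whether it stayed within a latency budget. The names `predict_one` and `fraud_score`, the feature names, and the 50 ms default budget are assumptions for illustration only.

```python
import time
from typing import Callable, Dict, Tuple

Features = Dict[str, float]


def fraud_score(features: Features) -> float:
    """Hypothetical lightweight model, cheap enough to run per request."""
    return min(1.0, features["amount"] / 10000.0 + 0.4 * features["new_device"])


def predict_one(
    model: Callable[[Features], float],
    features: Features,
    budget_ms: float = 50.0,
) -> Tuple[float, float, bool]:
    """Run a single prediction and measure how long it took."""
    start = time.perf_counter()
    prediction = model(features)
    latency_ms = (time.perf_counter() - start) * 1000.0
    return prediction, latency_ms, latency_ms <= budget_ms


prediction, latency_ms, within_budget = predict_one(
    fraud_score, {"amount": 5000.0, "new_device": 1.0}
)
```

Tracking the budget per request is what lets a real service alert on slow predictions instead of discovering them from user complaints.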

Head-to-Head Comparison

How Batch ML Processing and Real-Time ML Processing stack up across key criteria.

| Criteria | Batch ML Processing | Real-Time ML Processing | Winner |
|---|---|---|---|
| Latency | Minutes to hours between data arrival and prediction availability | Milliseconds to seconds for individual predictions | Real-Time |
| Throughput | Optimized for processing millions of records efficiently | Handles individual requests; throughput limited by infrastructure | Batch |
| Infrastructure Cost | Compute runs only during scheduled windows; spot instances viable | Always-on inference servers required; higher baseline costs | Batch |
| Data Freshness | Predictions based on data as of last batch run | Predictions use the most current data and features | Real-Time |
| Implementation Complexity | Simpler architecture with scheduled jobs and storage | Requires model serving, feature stores, and monitoring infrastructure | Batch |
| Feature Engineering | Can use complex, computationally expensive features | Features must be computed in real-time or pre-cached in feature stores | Batch |
| Error Recovery | Rerun the entire batch job if errors occur; idempotent | Errors affect individual requests; requires circuit breakers and fallbacks | Batch |
| Model Complexity | No latency constraints; use the most complex, accurate model | Model size and complexity limited by latency requirements | Batch |
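The Error Recovery row mentions circuit breakers and fallbacks for the real-time path. A minimal sketch of that idea follows. The `CircuitBreaker` class, its thresholds, and the injectable clock are assumptions for illustration, not a specific library's API.

```python
import time
from typing import Any, Callable, Optional


class CircuitBreaker:
    """Stop calling a failing model for a cooldown period and serve a fallback."""

    def __init__(
        self,
        max_failures: int = 3,
        cooldown_s: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, primary: Callable[..., Any], fallback: Callable[..., Any], *args: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                return fallback(*args)  # circuit open: skip the primary model
            # Cooldown elapsed: fall through and allow one trial call.
        try:
            result = primary(*args)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            return fallback(*args)
        # Success: close the circuit and reset the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A common fallback is the last batch-computed prediction or a safe default, so a model outage degrades quality rather than failing the request.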


When to Use Each

Use Batch ML Processing when...

- Predictions can be pre-computed (recommendations, risk scores, segments)

- Your data updates on a schedule (daily reports, nightly data loads)

- You need complex models without latency constraints

- Cost optimization is a priority and you can use spot/preemptible instances

- The prediction use case tolerates minutes-to-hours staleness

Use Real-Time ML Processing when...

- User-facing applications require instant predictions (search ranking, personalization)

- Decisions must be made at the point of transaction (fraud detection, pricing)

- Data arrives as a continuous stream rather than in scheduled batches

- The value of a prediction degrades quickly with staleness

- Interactive applications depend on ML predictions in the request path
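The two checklists above can be condensed into a rough decision helper. This is only a sketch: the `UseCase` fields, the `choose_mode` function, and the 300-second cutoff are illustrative assumptions, and a real decision would also weigh cost and model complexity.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    max_staleness_s: float    # how old a prediction may be before it loses value
    in_request_path: bool     # does a user or transaction wait on the prediction?
    inputs_known_ahead: bool  # can inputs be enumerated before the request arrives?


def choose_mode(use_case: UseCase, staleness_cutoff_s: float = 300.0) -> str:
    """Return "batch" or "real-time" using a deliberately simple heuristic."""
    if not use_case.inputs_known_ahead:
        return "real-time"  # nothing to pre-compute against
    if use_case.in_request_path and use_case.max_staleness_s < staleness_cutoff_s:
        return "real-time"  # value degrades faster than a batch can refresh
    return "batch"


nightly_segments = UseCase("customer segments", 86400.0, False, True)
card_fraud = UseCase("card fraud check", 1.0, True, False)
print(choose_mode(nightly_segments), choose_mode(card_fraud))
```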

Our Recommendation

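Many production ML systems combine both approaches, often along the lines of a lambda architecture, which pairs a batch layer with a low-latency speed layer. A kappa architecture, by contrast, treats all data as a stream and reprocesses history through the same streaming path. Pre-compute what you can in batch to reduce real-time infrastructure costs, and serve real-time predictions only where freshness is essential. WebbyButter designs ML architectures that optimize the batch-vs-realtime boundary for your specific latency and cost requirements.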
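One way to picture the "pre-compute what you can" advice is a serving path that prefers a stored batch prediction and only calls a real-time model when that prediction is missing or too old. The sketch below assumes a dict of `(value, computed_at)` pairs as the batch store; `serve` and its parameters are hypothetical names.

```python
import time
from typing import Callable, Dict, Optional, Tuple


def serve(
    key: str,
    batch_store: Dict[str, Tuple[float, float]],  # key -> (prediction, computed_at)
    realtime_model: Callable[[str], float],
    max_age_s: float,
    now: Optional[float] = None,
) -> Tuple[float, str]:
    """Return a prediction and which path ("batch" or "real-time") produced it."""
    now = time.time() if now is None else now
    entry = batch_store.get(key)
    if entry is not None:
        value, computed_at = entry
        if now - computed_at <= max_age_s:
            return value, "batch"  # fresh enough: no model call needed
    # Missing or stale: pay the real-time cost for this request only.
    return realtime_model(key), "real-time"


store = {"u1": (0.82, 1000.0)}
print(serve("u1", store, lambda k: 0.5, max_age_s=3600.0, now=1500.0))
print(serve("u2", store, lambda k: 0.5, max_age_s=3600.0, now=1500.0))
```

Tuning `max_age_s` per use case is one concrete way to move the batch-vs-realtime boundary without changing the rest of the serving code.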

Optimize Your ML Pipeline for Speed and Scale

Choose the right processing architecture to balance low-latency requirements with computational efficiency.

- Real-time inference systems for instant predictions and user feedback

- High-throughput batch processing for large-scale data analysis

- Streaming analytics integration for continuous model improvement


Architect Your ML Processing Pipeline

Whether batch, real-time, or both, our ML engineers design and deploy processing architectures optimized for your latency requirements and cost constraints.